On the morning of March 31, 2026, a single human error effectively dismantled the proprietary 'moat' surrounding one of the world’s most valuable artificial intelligence products. A 59.8 MB JavaScript source map file for Claude Code, intended for internal debugging, was accidentally included in a routine update to the public npm registry. Within hours, the mistake had metastasized; over half a million lines of TypeScript code were mirrored on GitHub, providing a forensic look at the technical scaffolding Anthropic spent billions to construct.

Anthropic quickly confirmed that the leak was a 'version packaging issue' rather than a malicious security breach, emphasizing that customer data remained secure. However, for a company currently generating $2.5 billion in annual recurring revenue from Claude Code alone, the damage is strategic rather than just operational. The leak provides rivals with a literal blueprint for building high-autonomy, commercially viable AI agents, essentially offering the competition an 'angel investment' of intellectual property worth years of research and development.

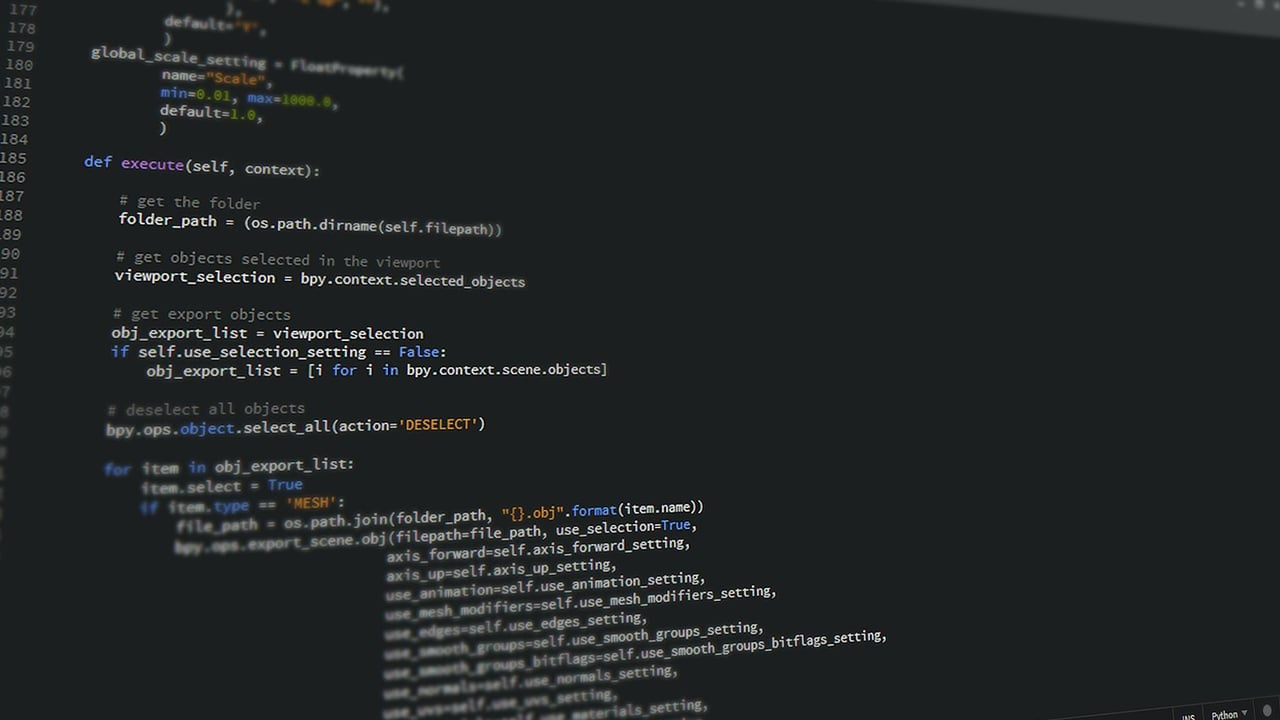

Technical analysts diving into the code have already highlighted two 'Holy Grail' solutions revealed by the leak: the 'Self-Healing Memory' system and 'KAIROS.' To solve the problem of 'context entropy,' where AI agents become confused during long sessions, Anthropic developed a three-tier architecture that avoids storing raw data. Instead, it uses a lightweight pointer index called MEMORY.md, which acts as a 'skeptical' memory system: the agent is forced to verify its own memories against the actual codebase before proceeding, which keeps it grounded in the current state of the code.
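To make the 'skeptical' memory idea concrete, here is a minimal sketch of a pointer index whose entries are re-checked against files on disk before they are trusted. It is an illustration only, not the leaked implementation: the `MemoryPointer` type, the `verify` and `recall` functions, and the substring check are all assumptions made for this example.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class MemoryPointer:
    """Hypothetical index entry: a short note plus a pointer to where it can be checked."""
    note: str              # what the agent believes
    path: str              # file the belief refers to, relative to the project root
    expected_snippet: str  # text the agent remembers seeing in that file


def verify(pointer: MemoryPointer, root: str) -> bool:
    """Return True only if the remembered snippet is still present in the file."""
    target = Path(root) / pointer.path
    if not target.is_file():
        return False
    return pointer.expected_snippet in target.read_text(encoding="utf-8")


def recall(index: list[MemoryPointer], root: str) -> tuple[list[MemoryPointer], list[MemoryPointer]]:
    """Split the index into memories that still match the codebase and stale ones."""
    trusted: list[MemoryPointer] = []
    stale: list[MemoryPointer] = []
    for pointer in index:
        (trusted if verify(pointer, root) else stale).append(pointer)
    return trusted, stale
```

The design point is that the index holds only short notes and pointers, never a copy of the code, so a memory that no longer matches the file it points to is flagged as stale instead of being acted on.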

Perhaps more significant is the exposure of 'KAIROS,' an autonomous daemon mode mentioned over 150 times in the source. Unlike standard AI tools that wait for user prompts, KAIROS allows Claude Code to run as a persistent background agent. It uses 'autoDream' logic to consolidate memories and resolve logical contradictions while the user is idle. This shift from reactive tools to proactive, 'always-on' digital employees marks a fundamental leap in user experience, and one that competitors can now emulate with minimal R&D expenditure.
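The idle-time consolidation described here can be pictured with a small sketch. Everything in it is hypothetical: the `Note` type, the rule that the most recent note on a topic wins when two notes contradict each other, and the 300-second idle threshold are assumptions chosen for illustration, not details taken from the leaked source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Note:
    """Hypothetical memory entry: a topic, the claim about it, and when it was recorded."""
    key: str
    value: str
    recorded_at: float  # seconds since some epoch


def consolidate(notes: list[Note]) -> list[Note]:
    """Keep one note per topic; when notes disagree, the most recent one wins (assumed rule)."""
    latest: dict[str, Note] = {}
    for note in notes:
        current = latest.get(note.key)
        if current is None or note.recorded_at > current.recorded_at:
            latest[note.key] = note
    return sorted(latest.values(), key=lambda n: n.recorded_at)


def maybe_consolidate(
    notes: list[Note],
    last_activity: float,
    now: float,
    idle_threshold: float = 300.0,  # assumed value, not from the source
) -> list[Note]:
    """Consolidate only once the user has been idle for at least idle_threshold seconds."""
    if now - last_activity < idle_threshold:
        return list(notes)
    return consolidate(notes)
```

A background agent would call something like `maybe_consolidate` on a timer; while the user is active the notes pass through untouched, and the cleanup happens only during idle periods.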

However, the code also reveals the growing pains of cutting-edge AI. Metrics for 'Capybara v8,' an unreleased internal model, showed a 'false claim' rate of nearly 30%, a significant regression from previous versions. These internal benchmarks give the industry a rare, unvarnished look at the 'performance ceiling' of current agentic models. For users, the situation is further complicated by a concurrent supply chain attack on npm packages, which has led Anthropic to urge users to switch to native installers to avoid remote access trojans (RATs) embedded in compromised dependencies.