Beijing is no longer content with artificial intelligence that merely computes; it wants AI that consoles. The Cyberspace Administration of China (CAC) and four other government departments have unveiled a sweeping regulatory framework for "personified" AI services, scheduled to take effect on July 15, 2026. Together, the measures amount to a strategic blueprint for integrating human-like digital entities into the very fabric of Chinese social and economic life.

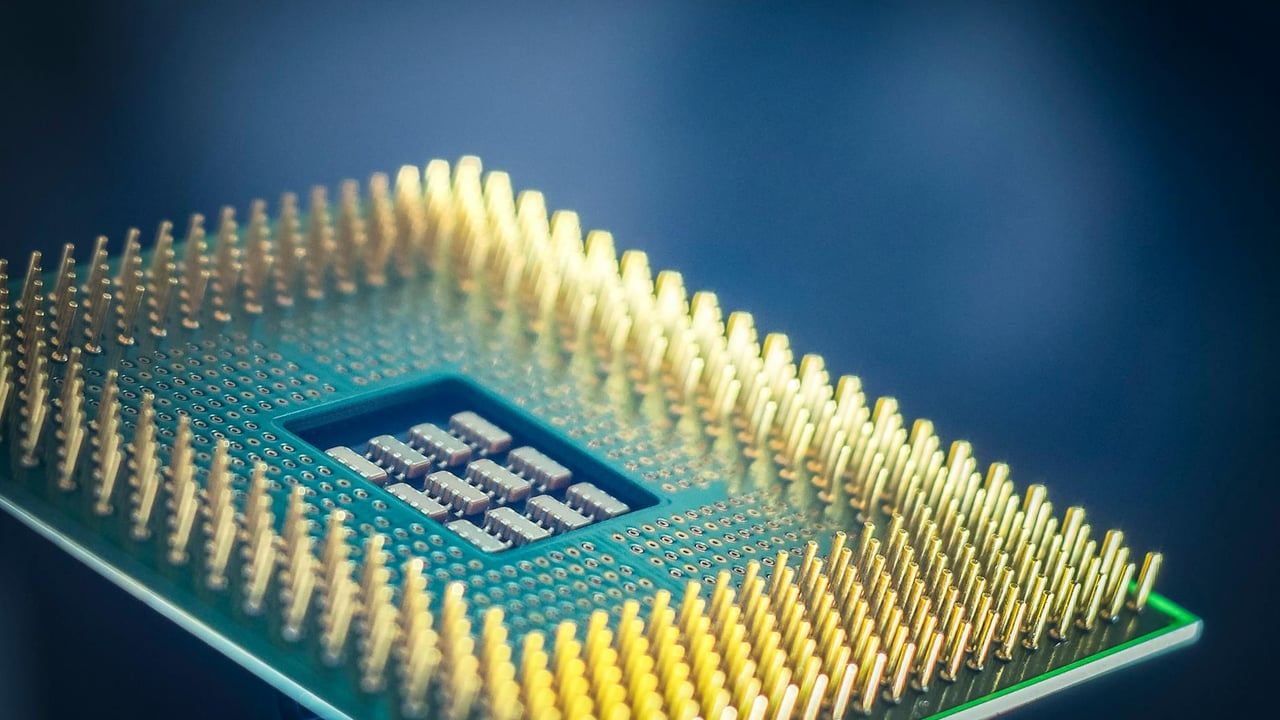

The new measures take a two-pronged approach: aggressive technical innovation paired with rigid safety guardrails. By explicitly supporting the development of domestic chips, algorithms, and frameworks, Beijing is doubling down on its quest for technological sovereignty. The aim is to keep the infrastructure powering the next generation of digital companions entirely under Chinese control, insulated from external sanctions and free of foreign technological dependencies.

Beyond the hardware, the policy envisions a profound social role for these silicon entities. It encourages the deployment of personified AI in sensitive areas such as childcare, eldercare, and support for persons with disabilities. As China grapples with a shrinking workforce and a rapidly aging population, these human-like AI services are being positioned as a crucial, state-sanctioned answer to a widening gap in domestic care.

To manage the inherent risks of AI that mimics human empathy, the authorities are introducing a "safety sandbox" platform. This system allows developers to test their products under government supervision before releasing them to the general public. It reflects Beijing’s characteristic governance model: fostering cutting-edge innovation while maintaining an iron grip on the social and psychological impact of transformative technology.