At the 2026 Google Cloud Next conference, CEO Sundar Pichai announced a sharp escalation in the company's infrastructure spending. Google projects capital expenditures of $175 billion to $185 billion this year, nearly six times its 2022 level. That investment underpins a strategic pivot toward what Google calls the 'Agentic Enterprise': a shift from simple AI assistants to autonomous agents that can execute complex business workflows.

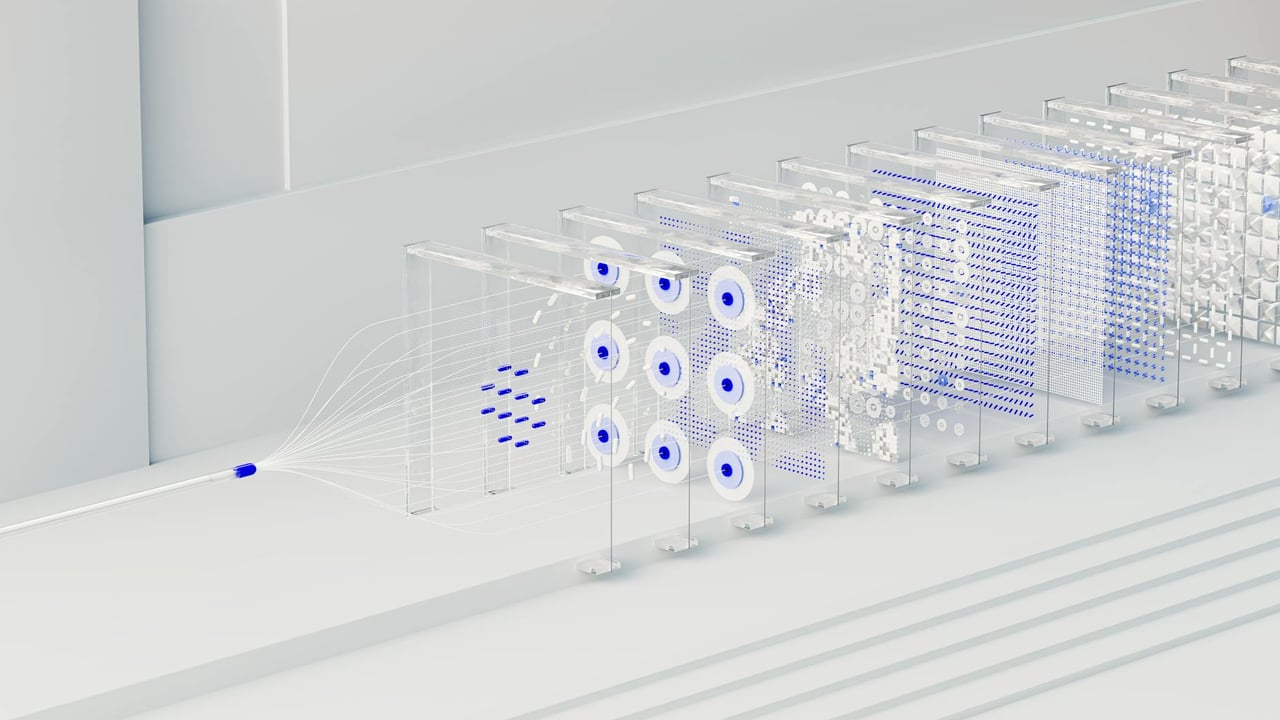

The hardware foundation of this shift is the eighth-generation Tensor Processing Unit (TPU), now split into two specialized models. The TPU 8t is optimized for large-scale model training, delivering 121 exaflops of compute, and uses Optical Circuit Switching (OCS) to automatically reroute traffic around hardware failures. The TPU 8i is engineered to break the 'memory wall', the data-access bottleneck that often limits AI performance, by pairing 288 GB of high-bandwidth memory with tripled on-chip SRAM.

Infrastructure efficiency remains a core differentiator for Google, which said the new chips are cooled by its fourth-generation liquid cooling technology. By pairing them with Axion, its custom Arm-based CPU platform, Google claims an 80% improvement in price-performance for inference workloads. This vertical integration, from the cooling pipes to the silicon, is meant to give Google a proprietary moat against rivals that depend more heavily on third-party hardware.

On the software front, the new 'Gemini Enterprise' platform and 'Knowledge Catalog' aim to give AI a deeper understanding of an organization's data. Unlike traditional data catalogs, these tools use AI to natively map unstructured data and the relationships between business entities. This lets 'Deep Research Agents' take on tasks that previously took weeks of human effort, such as auditing regional finances or tracing the root causes of supply chain disruptions, drawing on data even when it resides on competing platforms such as AWS or Microsoft Azure.

Finally, Google is making an aggressive play for Microsoft's market share with new enterprise migration tools. The company announced a 'Rapid Enterprise Migration' feature that reportedly moves organizations from Microsoft 365 to Google Workspace five times faster than previous methods. With 75% of Google's own new code now AI-generated, the company is positioning itself as the leading case study for the efficiency gains it promises enterprise clients worldwide.