Chinese AI pioneer SenseTime has officially launched and open-sourced its latest model series, 'SenseNova U1'. The new unified model marks a significant technical pivot, moving away from fragmented AI systems toward a single architecture that handles multi-modal understanding, reasoning, and content generation together.

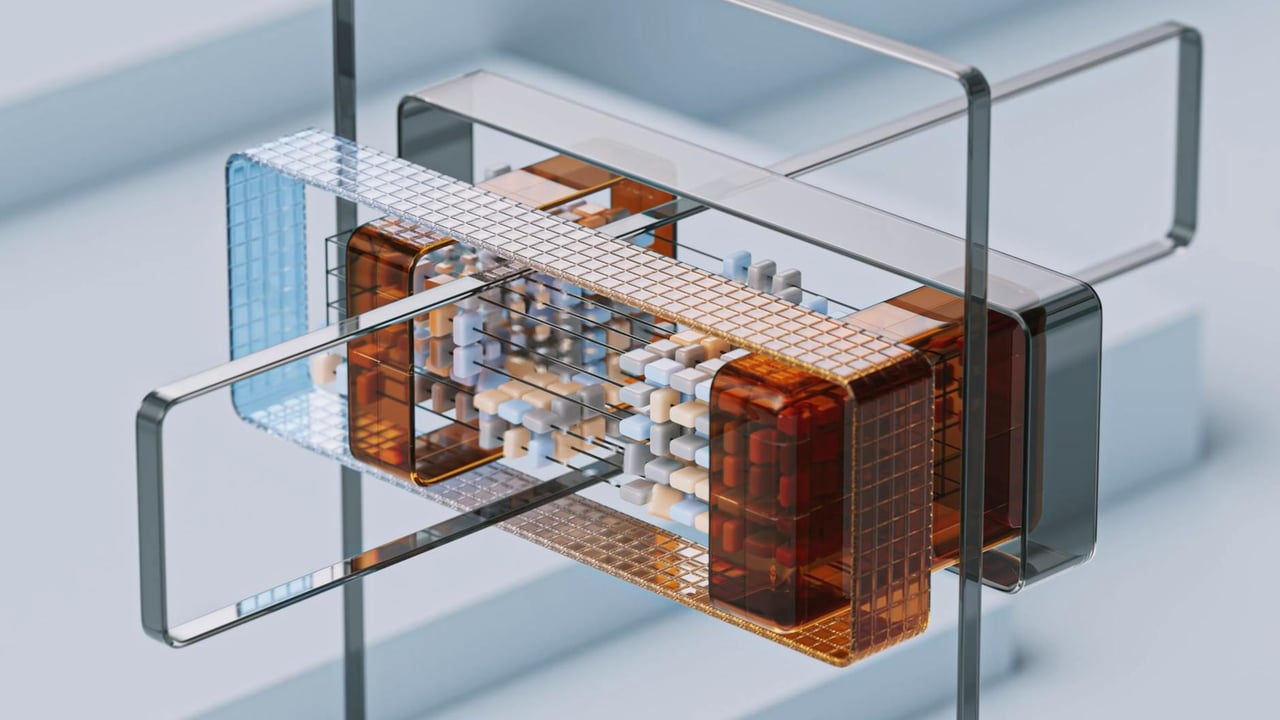

Built on the company’s proprietary NEO-unify architecture, SenseNova U1 is designed to be 'native' in its multi-modality. Unlike earlier approaches that often stitched together separate vision and language modules, the unified design is intended to allow more fluid transitions between processing visual data and generating complex logical inferences.

The decision to open-source SenseNova U1 is a strategic move within China’s hyper-competitive AI landscape. By lowering the barrier to entry for developers, SenseTime aims to build a robust ecosystem around its 'SenseNova' platform, directly challenging domestic rivals such as Alibaba and international open-source leaders such as Meta.

This release underscores a broader trend in the global AI race, in which the focus is shifting from text-only Large Language Models (LLMs) to Large Multi-modal Models (LMMs). SenseTime’s emphasis on 'unified' capabilities suggests a move toward more versatile AI agents capable of operating across diverse industrial and consumer applications.