Nvidia delivered a blockbuster quarter — $68.1 billion in revenue, well above expectations — and still saw its share price slip. After a modest intraday rise, the stock fell sharply the following session, erasing roughly $260 billion of market value. The episode underscores a new market mood: stellar sales no longer guarantee uninterrupted valuation growth when investors fear the company’s competitive edge is narrowing.

The company’s recent price action followed a flurry of sentiment swings: a rumor that Nvidia might halt investment in OpenAI knocked shares down, only for a rebound to follow when CEO Jensen Huang said on television that AI infrastructure is “seven to eight years” from maturity. The contrast between headline narratives and hard numbers, a standout quarter but a severe re-rating, is now central to how Wall Street assesses the firm.

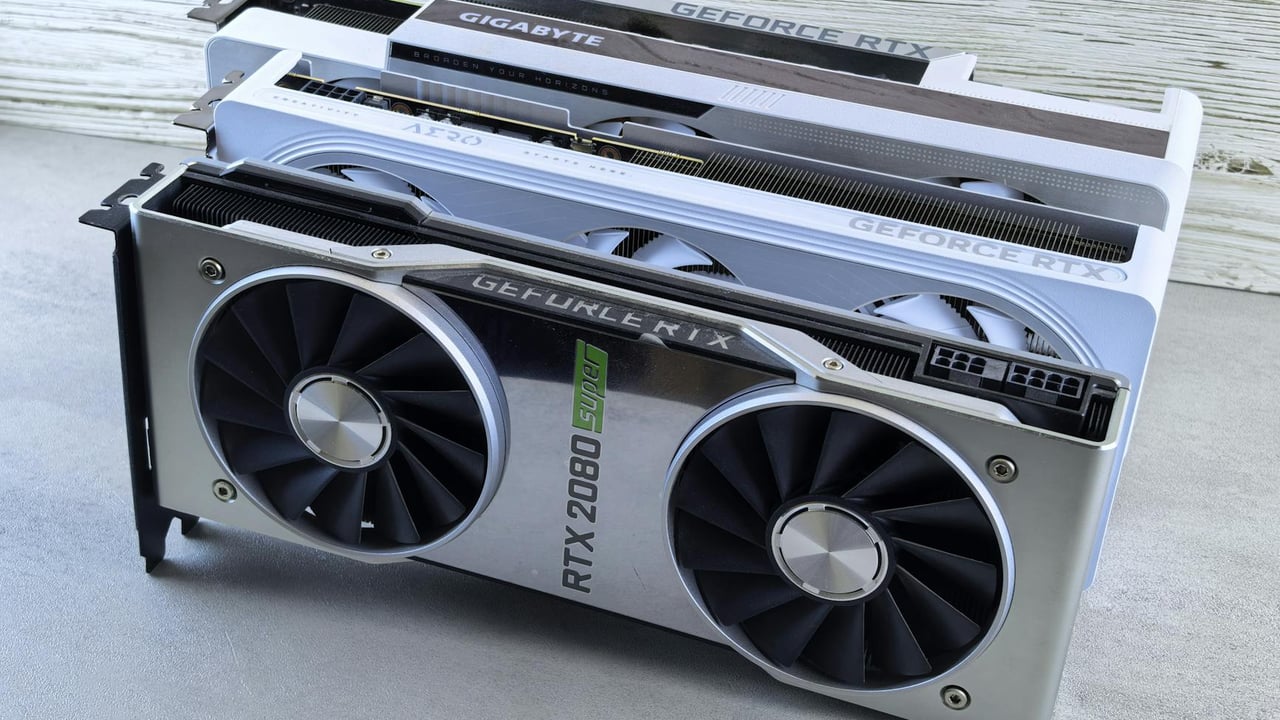

Nvidia’s rise is the product of repeated, concentrated bets. The firm moved from a niche graphics-chip maker to the de facto supplier of AI compute by marrying hardware with a developer ecosystem, CUDA, and by seizing two successive tailwinds: the cryptocurrency mining boom and the explosive demand for large-model training. Historical inflection points, from the RIVA 128 gamble in the 1990s to AlexNet’s breakout in 2012, show a pattern of all‑in decisions that produced an outsized market payoff.

That payoff rested on a simple scarcity: for several AI cycles, GPUs offered the best combination of performance, software ecosystem and developer familiarity. But scarcity is relative. As demand for AI compute ballooned, competitors and cloud providers began designing alternative silicon and vertically integrating stacks to cut costs and tighten control over margins.

The technical dynamics of model development are shifting too. The gains from ever-larger pre‑training runs are slowing; recent measures of model performance dispersion show the gap between the top models and their nearest rivals narrowing. As the industry moves from one-off, CapEx‑heavy training events to continuous, latency‑sensitive inference workloads, customers grow more conscious of per‑token costs and less willing to pay training‑era premiums.

That shift exposes Nvidia to two pressures. First, inference economics favour different architectures and deployment models: custom ASICs, TPUs and cloud‑native accelerators can be cheaper at scale. Counterpoint’s forecast that cloud providers’ ASIC shipments could overtake GPU shipments by 2028 captures that risk. Second, Nvidia’s distinctive advantage, ubiquitous CUDA code and a rich software stack, may erode as competitors improve their toolchains and as labour costs and ecosystem lock‑in shift.

Nvidia’s actions reflect this anxiety. The company has pushed packaging and networking innovations, notably promoting co-packaged optics (CPO) to tie high‑speed interconnects to its systems, and has begun to squeeze incremental margin from components such as optical modules. It has also navigated delicate geopolitics: a new US export decision cleared H200 sales to China under restrictive conditions, while the company has set aside a $4.5 billion inventory reserve for a China‑specific, reduced‑function H20 part and excluded any Chinese data‑centre revenue from near‑term guidance.

At the same time Nvidia is chasing adjacencies. Its BioNeMo framework and a headline partnership with Eli Lilly — a joint innovation lab funded up to $1 billion over five years — signal a push into drug discovery compute. Such verticals offer growth but are long‑horizon and capital‑heavy; they are unlikely to offset near‑term margin pressure from the core data‑centre business.

The company’s near‑term narrative will hinge on its March GTC event, where Huang promises “unseen” silicon and where observers expect announcements on tighter GPU+ASIC integration. That product signalling matters: Nvidia can preserve premium pricing if it demonstrably extends superior price‑performance, but the market is less tolerant of incrementalism now that multiple credible alternatives exist.

Nvidia is unlikely to collapse. Its installed base, software library and partner relationships give it durable strength. But the era when a general‑purpose GPU plus CUDA guaranteed monopoly‑like capture of AI surplus is likely passing. For investors, customers and competitors, the question has moved from whether Nvidia will win to how it will share and defend a smaller slice of a far bigger AI market.