# GPUs

Latest news and articles about GPUs

Nvidia Pushes ‘One‑Line’ Agent Deployment with NemoClaw to Cement GPU‑centric AI Ecosystem

At GTC, Nvidia introduced NemoClaw, a two‑command deployment toolchain optimized for the open‑source agent framework OpenClaw, aiming to bind GPU servers tightly to agent runtimes. The move continues Nvidia’s strategy of using software to drive hardware adoption and raises questions about portability, vendor lock‑in and standards in the rapidly growing agent ecosystem.

Nvidia Targets the ‘Inference’ Bottleneck with a New Generation of AI Chips

Nvidia is designing a new class of chips optimized for AI inference, prioritizing latency, throughput and energy efficiency for real‑time model serving. The move aims to lower the cost of running large models at scale and strengthens Nvidia’s position across the AI value chain while intensifying competitive and geopolitical pressures in the semiconductor industry.

When Beats Don’t Boost: Why Nvidia’s Record Quarter Prompted a Market Rethink

Nvidia posted another extraordinary quarter, yet its stock tumbled as investors worried the company’s GPU advantage is shrinking. Structural shifts — from training to inference, rising alternative silicon and cloud providers’ vertical integration — are compressing margins and forcing Nvidia to seek new revenue streams while defending its core franchise.

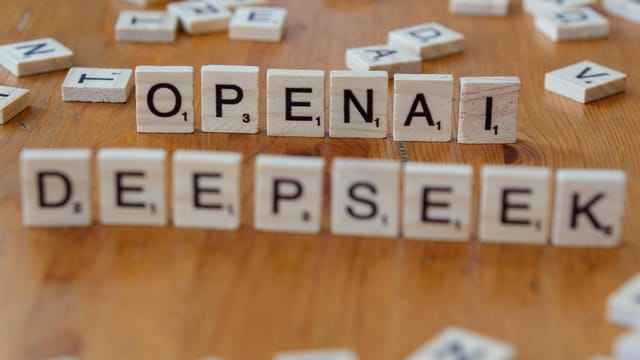

Blowout Quarter, Tepid Market: Nvidia’s Strong Results Undercut by AI‑Monetisation Fears and an Uncertain OpenAI Pact

Nvidia posted a stellar quarter driven by its data‑centre GPUs and issued aggressive revenue guidance, yet the stock fell more than 5% as investors fretted about the sustainability of AI capex, lofty valuations and uncertainty around a large potential OpenAI investment. The sell‑off hit other chip names too, reflecting concern about demand sensitivity to hyperscaler spending and a market shift from training to inference workloads.

Huang’s Rule: In the AI Era, Compute Is Money — Nvidia Maps Out a Cash-Driven Infrastructure Monopoly

Nvidia used its strong quarterly results to reframe the economics of AI around one simple truth: compute generates tokens, and tokens generate revenue. Jensen Huang argued that the company’s architectural compatibility, software ecosystem and new networking products make Nvidia the indispensable infrastructure provider for the agent‑based AI era, while flagging longer‑term bets in robotics and space computing.

Nvidia and Meta Forge Multi‑Year AI Partnership as Meta Orders Millions of Chips

Nvidia and Meta have signed a multi‑year partnership that will see Meta deploy millions of Nvidia chips across on‑premises and cloud infrastructure. The deal secures compute supply for Meta's AI ambitions while reinforcing Nvidia's dominant position in AI hardware, with wide implications for competitors, cloud providers and energy use.

Token Tsunami and Power Limits Propel a Boom in Liquid‑Cooled AI Servers

Exploding AI token consumption and rising hardware costs are driving a surge in rented AI compute and accelerating adoption of liquid‑cooled, high‑density servers. Policy limits on data‑centre energy efficiency and the shift from training to widespread inference are making immersion cooling and edge deployment central to scaling AI affordably and sustainably.

Musk Recasts xAI as Four-Part Powerhouse — From Grok to 'Macrohard' and a Moon-Built Future

Elon Musk has reorganised xAI into four focused product teams — Grok (core model), Grok Code, Grok Imagine, and Macrohard (digital agents) — while pressing an ambitious plan to scale compute through terrestrial clusters and lunar manufacturing. The restructure follows co‑founder departures and a SpaceX acquisition that folded xAI into a larger, capital‑intensive space and social‑media strategy.

Musk Warns of an AI Power Crunch — and Suggests Moving GPU Farms to Space

Elon Musk warned that skyrocketing GPU production could outpace electricity supply, potentially leaving large AI clusters unable to power up. He suggested that space-based data centres might become economically attractive if terrestrial power capacity fails to keep pace, a claim that highlights broader tensions between AI compute demand and grid capabilities.

Musk Says Space Will Be the Cheapest Place to Run AI Within Three Years — Here’s Why That Would Upend the Cloud

Elon Musk said on a podcast that, within 30–36 months, running large AI clusters in space will be far cheaper than on Earth, arguing that terrestrial power constraints, grid bottlenecks and supply‑chain limits make orbital solar arrays economically superior. He cited higher energy yield from space solar, less need for batteries, and simpler approvals than for terrestrial PV, while acknowledging that engineering and regulatory hurdles remain.

Huang Dials Down $100bn OpenAI Talk — Nvidia Says Any Funding Will Be Evaluated 'Round by Round'

Jensen Huang said Nvidia never committed to a $100 billion investment in OpenAI and will evaluate any funding opportunity incrementally. The clarification reduces short‑term market uncertainty and signals Nvidia’s preference to remain a broadly neutral supplier rather than take on outsized financial exposure to a single AI lab.

Huang Says Nvidia Will Join OpenAI’s New Fundraise — But $100bn Claim Is Off the Table

Jensen Huang confirmed Nvidia will participate in OpenAI’s current fundraising round but denied the company would invest anywhere near $100 billion, a figure that had been reported earlier. His comments aim to reassure markets that Nvidia–OpenAI ties remain strong while tempering expectations about the scale of Nvidia’s financial commitment.