The AI hardware race has quietly flipped. Where cloud buyers once compared GPU teraflops, procurement conversations in 2026 increasingly revolve around how much high-bandwidth memory (HBM) a card carries and how wide its memory interface is, a shift industry insiders sum up as “buy memory, get the core for free.” Engineers and supply-chain managers now say HBM and advanced packaging such as CoWoS can be the largest line items on a high-end accelerator's bill of materials, eclipsing the GPU die itself.

That change is a direct consequence of how large models are deployed today. Since ChatGPT's breakthrough in late 2022, model sizes have ballooned and deployment patterns have evolved: beyond pure training, inference workloads driven by agents and long-context use cases have become mainstream. These applications drive two distinct forms of memory demand: capacity to keep model weights resident, and fast, transient working memory (the KV cache and activations) sized to support sessions of hundreds of thousands to a million tokens.
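To give a feel for the scale of the second kind of demand, here is a minimal sketch of the usual back-of-envelope KV-cache estimate. The model shape (80 layers, 8 key/value heads, head dimension 128) and the 16-bit cache precision are hypothetical values chosen for illustration, not figures from any vendor or from this reporting.

```python
from dataclasses import dataclass

GIB = 1024 ** 3


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical decoder-only transformer shape (illustrative only)."""
    n_layers: int
    n_kv_heads: int
    head_dim: int


def kv_cache_bytes(shape: ModelShape, context_tokens: int,
                   bytes_per_value: int = 2, batch: int = 1) -> int:
    """Estimate KV-cache size for `batch` sessions of `context_tokens` each.

    The leading factor of 2 covers one key and one value vector per token,
    per layer, per KV head.
    """
    per_token = 2 * shape.n_layers * shape.n_kv_heads * shape.head_dim * bytes_per_value
    return per_token * context_tokens * batch


if __name__ == "__main__":
    shape = ModelShape(n_layers=80, n_kv_heads=8, head_dim=128)  # assumed shape
    for tokens in (8_192, 131_072, 1_000_000):
        size = kv_cache_bytes(shape, tokens)
        print(f"{tokens:>9} tokens: {size / GIB:8.1f} GiB of KV cache per session")
```

Under these assumptions a single 131,072-token session needs about 40 GiB of cache on top of the weights, which is why long-context serving is constrained by memory capacity well before it is constrained by compute.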

HBM is attractive because it integrates stacked DRAM very close to the compute die with wide buses, dramatically raising effective bandwidth and reducing latency compared with traditional off-package memory. But that performance comes at a price. Advanced 2.5D/3D packaging such as CoWoS introduces its own costs and yield challenges. One vendor example cited in this reporting shows HBM and CoWoS together making up roughly 80% of the manufacturing cost of a high-end inference/training card, with the GPU logic chip representing a surprisingly small share.

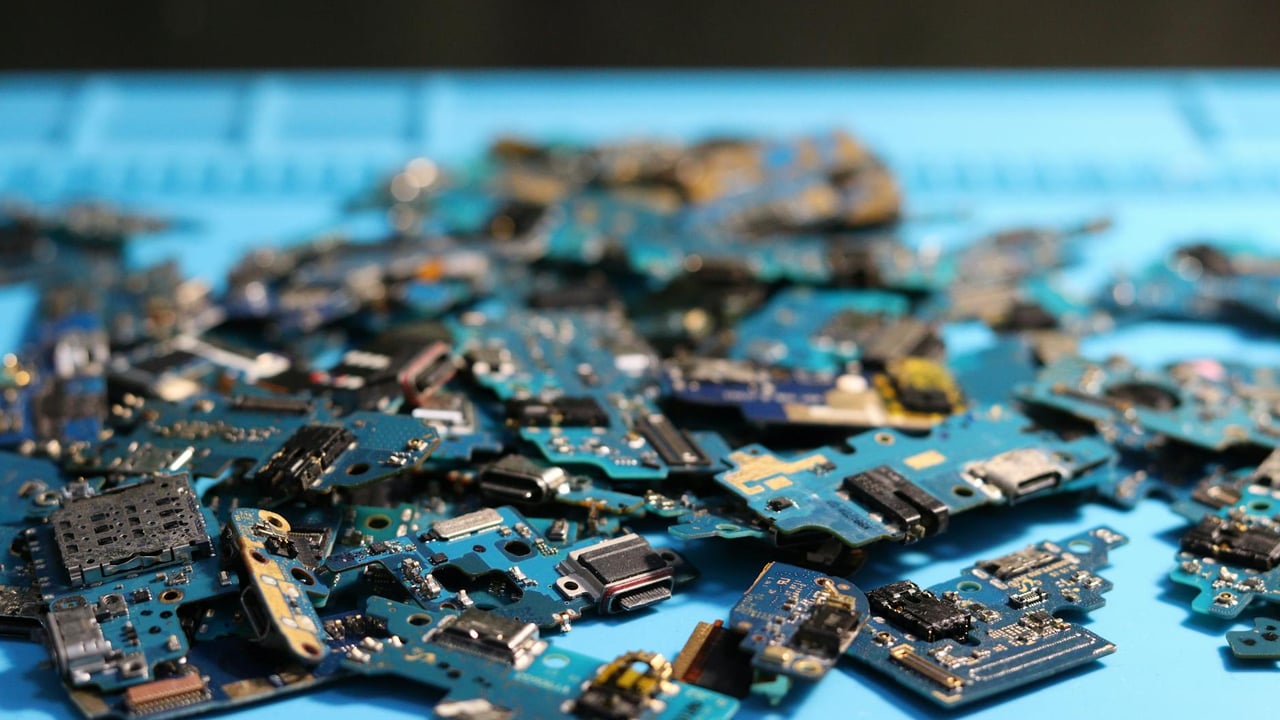
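The bandwidth side of the argument can be sketched the same way. When token generation is memory-bound, each new token requires streaming the weights and the session's KV cache from memory once, so memory bandwidth sets a ceiling on single-stream decode speed. The figures below (3,000 GB/s and 1,000 GB/s of bandwidth, 140 GB of weights) are assumed round numbers for illustration, and the model ignores batching, compute limits and sparsity.

```python
def decode_tokens_per_second(bandwidth_gb_s: float, weight_gb: float,
                             kv_cache_gb: float = 0.0) -> float:
    """Upper bound on single-stream decode speed when memory-bound.

    Assumes every generated token reads all weights and the whole KV cache
    exactly once; real systems batch requests and overlap work, so treat
    this as a rough ceiling rather than a prediction.
    """
    gb_read_per_token = weight_gb + kv_cache_gb
    if gb_read_per_token <= 0:
        raise ValueError("at least some data must be read per token")
    return bandwidth_gb_s / gb_read_per_token


if __name__ == "__main__":
    weights_gb = 140.0  # assumed: 70B parameters at 16 bits each
    for label, bw in (("HBM-class (assumed)", 3000.0),
                      ("off-package (assumed)", 1000.0)):
        rate = decode_tokens_per_second(bw, weights_gb)
        print(f"{label:<22} {rate:6.1f} tokens/s ceiling")
```

In this simplified model, tripling bandwidth triples the ceiling regardless of how many FLOPS the compute die offers, which is the intuition behind the emphasis on memory over the core.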

On the supply side, manufacturers have responded by reallocating DRAM capacity. Samsung, SK Hynix and Micron have diverted significant wafer starts toward HBM and high-performance DDR5 to meet cloud and AI demand, reducing output of consumer GDDR and commodity DRAM. The market effect is visible: memory spot prices surged from mid‑2025 and remained elevated into 2026, and consumer graphics cards and mobile devices are already feeling the pinch as memory makers prioritise high-margin HBM production.

Short of an abrupt demand reversal, additional fab capacity, including the upcoming Samsung P4L and SK Hynix M15x lines intended for HBM and high-end DDR, will primarily temper further price escalation rather than deliver steep discounts. Data-centre buyers and AI cloud operators will be first in line for new HBM wafers, while consumer markets are likely to see relief only if vendors reallocate capacity back to commodity parts. For Chinese AI-chip makers the imbalance presents both a challenge and an opening: with HBM scarce and costly, Chinese teams are doubling down on system-level and software engineering (low-bit quantisation, selective loading of experts, KV-cache optimisation and other deployment tricks) to reduce memory footprints and improve effective throughput.
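As a rough illustration of why low-bit quantisation is the first lever on that list, the sketch below estimates weight footprint at different bit-widths. The 70-billion-parameter model, the group size of 128 and the 16-bit per-group scale are assumptions made for the example; real quantisation schemes differ in their metadata overhead.

```python
from typing import Optional

GIB = 1024 ** 3


def quantised_weight_gib(n_params: int, bits_per_weight: int,
                         group_size: Optional[int] = None,
                         scale_bits: int = 16) -> float:
    """Approximate weight footprint in GiB at a given bit-width.

    If `group_size` is set, one scale of `scale_bits` bits is stored per
    group of weights (a simplified, assumed group-wise scheme).
    """
    total_bits = n_params * bits_per_weight
    if group_size:
        n_groups = -(-n_params // group_size)  # ceiling division
        total_bits += n_groups * scale_bits
    return total_bits / 8 / GIB


if __name__ == "__main__":
    n_params = 70_000_000_000  # assumed model size
    for bits, group in ((16, None), (8, 128), (4, 128)):
        size = quantised_weight_gib(n_params, bits, group)
        print(f"{bits:>2}-bit weights: {size:7.1f} GiB")
```

Moving from 16-bit to 4-bit weights cuts the footprint to roughly a quarter under these assumptions, which can decide whether a model fits on a card with less HBM.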

The strategic implications are broad. Cloud economics have shifted: providers may prefer more memory-rich instances even if raw compute per dollar is lower, while chipmakers are pushed to rethink designs around memory capacity and bandwidth rather than peak FLOPS. The concentration of HBM supply among a handful of vendors creates geopolitical and commercial risk. For actors looking to secure their AI roadmaps, that risk increases the incentive to pursue alternative architectures, memory-efficient models, and domestic upstream investment in packaging and DRAM.